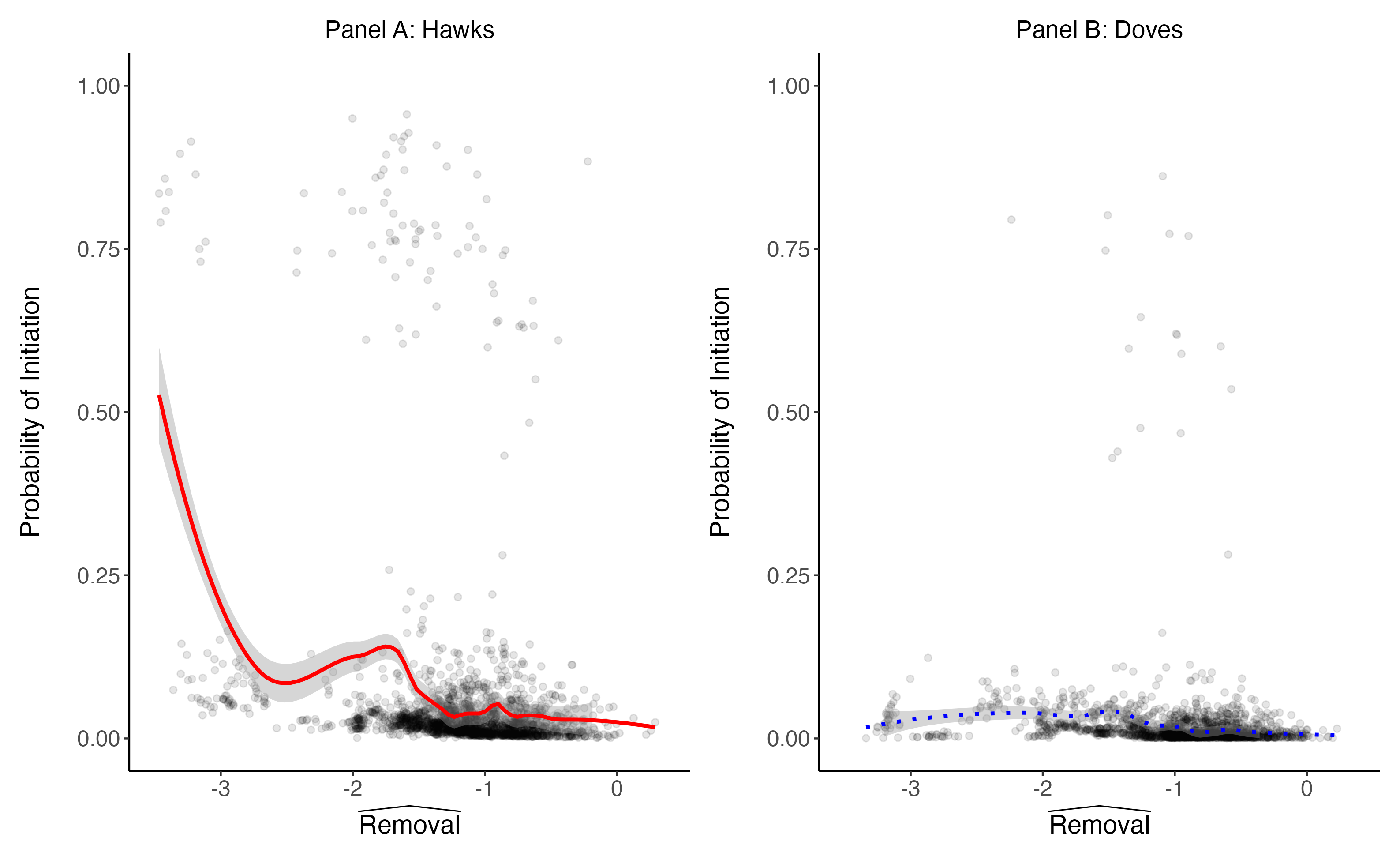

Do college football games influence U.S. elections? Healy, Malhotra, and Mo (2010) found that college football outcomes are correlated with election results. Fowler and Montagnes (2015) found that the estimated effects of college football games do not vary in the ways we would theoretically expect if the effect were genuine and that NFL games have no effect, suggesting that the original result was likely a chance false positive. Graham et al. (2022a) reevaluated the effect of college football games on elections by adding new data. Although the estimates weakened when a small amount of new data was added, they concluded that the evidence mostly supports the original finding. Fowler and Montagnes (2022) responded with simulations showing that the evidence in Graham et al. (2022a) is statistically consistent with the possibility that the original result was a chance false positive and statistically inconsistent with the possibility of a genuine result of the magnitude reported in the original paper. Graham et al. (2022b) have written a reply to Fowler and Montagnes (2022), and as ridiculous as this all sounds, this is our reply to that reply.

Why run a plethora of tests?

Graham et al. (2022b) raise two disagreements with us. First, they argue that we ran a plethora of tests while they focused on a preferred specification. To be clear, we simply ran the same plethora of tests that they reported in Graham et al. (2022a). We did not add new specifications or hypotheses. But because there were so many different tests, we conducted global hypothesis tests across all specifications. We weighted all the specifications equally in these tests because Graham et al. (2018) did not pre-register any alternative way of weighting the tests. Graham et al. (2022b) claim that they did pre-specify a preferred approach, but the section of their pre-analysis plan from which they quote simply pre-specified their preferred approach to fixed effects, lags, and controls, which they utilize in all of the tests in question. Graham et al. (2022b) also argue that by running so many tests and weighting them equally, we “risk pushing theory into the background” (p. 2). But the tests that they now would like us to down-weight, having seen the results, were described in their pre-analysis plan as “theoretically-relevant heterogeneous effects” (Graham et al. 2018, p. 12).

Should we prioritize out-of-sample data?

Second, while they prioritize estimates from the full, expanded sample of data, we prioritize estimates from the out-of-sample data. We agree that if this were the first time anyone was examining this data, it would make sense to use as much data as possible and prioritize the expanded sample. Similarly, if this were a meta-analysis where we just wanted to update our estimate, it would make sense to focus on the pooled data. However, the stated goal of Graham et al. (2022a) was independent replication. If we want to assess whether the original finding was a chance false positive, we need to focus on new, independent evidence. As the simulations in Fowler and Montagnes (2022) show, we would expect the pooled estimates to still be mostly supportive of the original hypothesis even if the original finding was a chance false positive because most of the data is from the previous study. So there is a clear reason to privilege the out-of-sample data.

Suppose that someone conducted an experiment with 1,000 observations and found a surprising, newsworthy, statistically significant result. Another team of researchers decided to reevaluate the hypothesis by collecting an additional 400 observations and also collecting a previously uncollected control variable for 200 observations in the original sample. They re-analyze the data, pooling the old and new observations, and although the results are weaker, they are still mostly in the direction of the original hypothesis. Would this evidence bolster your confidence in the original hypothesis? Would you call this a replication? Presumably, the answer to both questions is no, and the answer doesn’t depend on whether the study is experimental or observational.
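To make the thought experiment concrete, here is a minimal simulation sketch. It is not the simulation from Fowler and Montagnes (2022). It assumes a true effect of exactly zero, treats each estimate as a sample mean of unit-variance noise, ignores the additional control variable, and only keeps original studies that happened to produce a positive, significant result. The function name and the default sample sizes (1,000 old and 400 new observations) are illustrative choices taken from the example above.

```python
import random


def simulate_pooled_replication(n_old=1000, n_new=400, n_sims=5000, seed=0):
    """Simulate a chance false positive followed by a pooled re-analysis.

    Assumption: the true effect is zero, so the mean of n unit-variance
    observations is drawn directly as Normal(0, 1 / sqrt(n)).
    """
    rng = random.Random(seed)
    se_old = n_old ** -0.5
    se_new = n_new ** -0.5
    se_pooled = (n_old + n_new) ** -0.5

    pooled_positive = 0
    pooled_significant = 0
    new_positive = 0
    for _ in range(n_sims):
        # Keep only originals that were positive and significant,
        # i.e. the chance false positives that get noticed.
        while True:
            old = rng.gauss(0.0, se_old)
            if old / se_old > 1.96:
                break
        # The new observations are independent of the original sample.
        new = rng.gauss(0.0, se_new)
        # The pooled estimate is the sample-size-weighted average.
        pooled = (n_old * old + n_new * new) / (n_old + n_new)

        pooled_positive += pooled > 0
        pooled_significant += pooled / se_pooled > 1.96
        new_positive += new > 0

    return {
        "pooled_positive": pooled_positive / n_sims,
        "pooled_significant": pooled_significant / n_sims,
        "new_positive": new_positive / n_sims,
    }


if __name__ == "__main__":
    for name, share in simulate_pooled_replication().items():
        print(f"{name}: {share:.3f}")
```

Under these assumptions, the pooled estimate points in the direction of the original hypothesis in nearly every simulated case even though the effect is zero by construction, while the new observations alone are positive only about half the time. That is the sense in which only the out-of-sample data can distinguish a false positive from a genuine result.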

What counts as out-of-sample data?

Belying their argument that we should not privilege out-of-sample data, Graham et al. (2022b) devote much of their reply to the question of what data should count as “out of sample.” Healy, Malhotra, and Mo (2010) analyze data from 1964-2006, but their specifications that control for betting odds only include data from 1985-2006. Graham et al. (2022a) analyze data from 1980-2016, and they have data on betting odds for their entire sample. Fowler and Montagnes (2022) classify data from 2007-2016 as out of sample, but Graham et al. (2022b) contend that 1980-1984 should also be classified as out of sample because Healy, Malhotra, and Mo (2010) never utilized data on betting odds for these years.

Our response to this argument is twofold. First, estimates that do and do not control for betting odds are not independent of one another. If noise caused the estimate without controlling to be an overestimate, the same noise will likely cause the estimate with controlling to be an overestimate. So as a matter of principle, data from 1980-1984 is not out-of-sample and does not offer much independent evidence. Second, Graham et al.’s pre-analysis plan (2018) reveals that they did not think of 1980-1984 as out-of-sample at the time they were planning their study. The pre-analysis plan specifically refers to 2008-2016 as “out-of-sample cases” (p. 4) while referring to 1980-1984 as “in-sample election cycles” (p. 5).
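The first point can be illustrated with a small simulation sketch. Everything in it is a hypothetical setup rather than the actual data: `odds` stands in for betting odds, `wins` for game outcomes that partly depend on the odds, and `votes` for an outcome that is pure noise, so the true effect of wins is zero. For each simulated dataset we estimate the effect of wins with and without the control and then ask how closely the two estimates track one another.

```python
import random


def mean(values):
    return sum(values) / len(values)


def slope(x, y):
    """OLS slope from regressing y on x with an intercept."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def residualize(x, c):
    """Residuals from regressing x on c with an intercept."""
    b = slope(c, x)
    mx, mc = mean(x), mean(c)
    return [a - mx - b * (k - mc) for a, k in zip(x, c)]


def pearson(u, v):
    """Pearson correlation between two equal-length lists."""
    mu, mv = mean(u), mean(v)
    num = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    den = (sum((a - mu) ** 2 for a in u) * sum((b - mv) ** 2 for b in v)) ** 0.5
    return num / den


def simulate_estimates(n_obs=200, n_sims=500, seed=0):
    """Return paired estimates (without control, with control) per dataset."""
    rng = random.Random(seed)
    without_control, with_control = [], []
    for _ in range(n_sims):
        odds = [rng.gauss(0, 1) for _ in range(n_obs)]
        wins = [0.5 * c + rng.gauss(0, 1) for c in odds]
        votes = [rng.gauss(0, 1) for _ in range(n_obs)]  # true effect is zero
        without_control.append(slope(wins, votes))
        # Frisch-Waugh-Lovell: the coefficient on wins, controlling for odds,
        # equals the slope of votes on wins residualized with respect to odds.
        with_control.append(slope(residualize(wins, odds), votes))
    return without_control, with_control


if __name__ == "__main__":
    without_control, with_control = simulate_estimates()
    print(f"correlation: {pearson(without_control, with_control):.2f}")
```

In this hypothetical setup, the two estimates are strongly positively correlated across datasets: when noise pushes one of them up, it pushes the other up as well. Adding a control to previously analyzed observations therefore yields little independent evidence.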

Concluding thoughts

Before seeing any evidence on college football and elections, most of us probably thought that a meaningful effect was, ex-ante, very unlikely. It’s not as ex-ante unlikely as extra-sensory perception (Galak et al. 2012), but it’s much less likely than most of the things we study in the social sciences. So although the statistically significant results from Healy, Malhotra, and Mo (2010) likely raised our beliefs about this possibility, we should have remained somewhat skeptical (Bueno de Mesquita and Fowler 2021, pp. 325-328; Fowler 2019). Additional evidence offered by Fowler and Montagnes (2015) and Graham et al. (2022a) was inconsistent with a genuine effect and consistent with the possibility that the original finding was a chance false positive. So in light of this new evidence, we should likely lower our beliefs, arguably below where we started before seeing any evidence.
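The logic of this updating can be sketched with Bayes' rule in odds form. The numbers below are purely hypothetical and are not estimates from any of the studies: a 5 percent prior, an original result that is 16 times more likely under a genuine effect than under no effect, and follow-up evidence that is 20 times more likely under no effect than under a genuine effect.

```python
def update(prior, likelihood_ratio):
    """Posterior probability of a genuine effect via Bayes' rule in odds form.

    likelihood_ratio = P(evidence | genuine effect) / P(evidence | no effect).
    """
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical inputs, chosen only to illustrate the direction of updating.
prior = 0.05
after_original = update(prior, likelihood_ratio=16.0)
after_followup = update(after_original, likelihood_ratio=0.05)

print(f"prior: {prior:.3f}")
print(f"after original study: {after_original:.3f}")
print(f"after follow-up evidence: {after_followup:.3f}")
```

With these illustrative inputs, belief rises substantially after the original study and then falls below the prior, because the combined likelihood ratio across both pieces of evidence is less than one. Whether our beliefs should actually end up below where we started depends on how strongly the later evidence disfavors a genuine effect.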

Although the topic might seem like it doesn’t warrant so many studies and replies, there is much at stake. If voters are influenced by politically irrelevant events such as college football games, perhaps we should worry that they are influenced by hundreds of other politically irrelevant events, which could be bad for electoral accountability (although see Ashworth and Bueno de Mesquita 2014). Indeed, many scholars and commentators point to evidence that irrelevant events affect elections to support their argument that democracy is broken (e.g., Achen and Bartels 2016; Brennan 2017; Caplan 2011; Somin 2013). The debate over college football and elections is especially relevant for these normative debates because most of the purportedly irrelevant events that purportedly influence elections are not, in fact, politically irrelevant (Ashworth, Bueno de Mesquita, and Friedenberg 2018). But if someone wants to argue that voters irrationally respond to irrelevant events and therefore democracy performs poorly, perhaps their best argument is to point to studies claiming that college football affects elections.

Because the stakes are so high, we should care whether the claims and findings published in our journals are reliable. As a field, we have to weigh the benefits of discovering new, exciting phenomena against the risk of obtaining false-positive results. If we are willing to accept some risk of false positives (we shouldn’t be doing empirical social science if we aren’t), we must also be willing to consider new evidence and revise our beliefs in light of that new evidence. Ideally, that new evidence will be independent of the previous evidence that contributed to the originally published, newsworthy finding.

This blog piece is based on the articles “Irrelevant Events and Voting Behavior: Replications Using Principles from Open Science” and “How Should We Think about Replicating Observational Studies? A Reply to Fowler and Montagnes,” all available in the current issue of the Journal of Politics, Volume 85, Number 1.

The empirical analysis of these articles has been successfully replicated by the JOP. Data and supporting materials necessary to reproduce the numerical results in the articles are available in the JOP Dataverse.

About the authors

Anthony Fowler is Professor in the Harris School of Public Policy at the University of Chicago. His research applies econometric methods for causal inference to questions in political science, with particular emphasis on elections and representation. You can find more information on his research here.

Pablo Montagnes is Associate Professor in the Department of Political Science and the Department of Quantitative Theory and Methods at Emory University. His research applies formal theory and econometric models to questions about organizations, political institutions, and elections. You can find more information on his research here and follow him on Twitter: @pmontagnes